Knuth is quoted as saying “premature optimization is the root of all evil,” which is why I’ve procrastinated so long before trying to speed up my 3D graphics code.

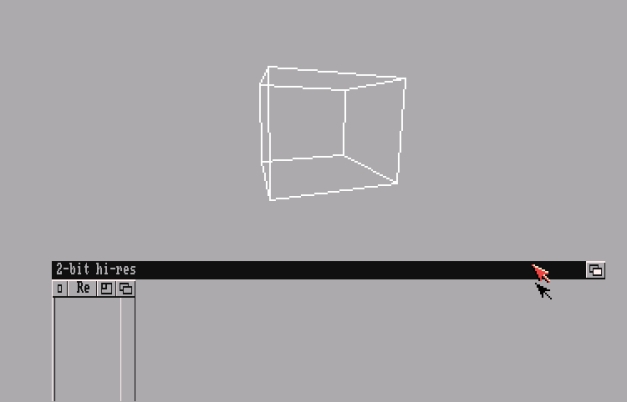

In my last exciting blog post, I made a cube spin on an Amiga 500 running at 7 MHz. The win here was correctness; I had demonstrated that my implementation of the algorithms of 3D rendering were correct. I could take a set of points, rotate and translate them in space, and then project them onto a 2D plane. No problem. Except.

It was too slow.

97 milliseconds of doom

With the first version of my code, I was getting a meager 10 FPS. Not that exciting, when you consider that I was only transforming 8 vertices and drawing 12 lines. I didn’t really expect my C code to run as fast as an Amiga demo written in 68000 assembly, but I figured I could do better than that.

My first step was to figure out where my time was being spent. The Amiga does have a high-resolution timer, and I used that to run a number of tests. This is an area where I’ve done formal research in the past, but for now I just wanted some quick-and-dirty average numbers. Here’s an example of what I found:

| Clear buffer |

6.8 ms |

| Update Transformations |

28.3 ms |

| Transform Vertices |

26.6 ms |

| Draw Lines |

4.3 ms |

| Swap Buffers |

31.0 ms |

| Total |

97.0 ms |

So there are two places where I’m spending a lot of time. The first is in the geometry calculations – creating transformation matrices and then transforming vertices. That’s not surprising – those functions involve a lot of multiplication of floating point numbers, and even a little division. The 68000 doesn’t have built-in support for floating point numbers, and my Amiga doesn’t have a math coprocessor (which, once upon a time, was a separate microchip sitting on the motherboard of your computer). It has to emulate floating point operations, and that’s really expensive.

(In fact, it’s such a big deal that the Amiga OS includes three different libraries of floating point operations, with different tradeoffs of speed and precision. For the record, my numbers above used the “Motorola fast floating point” libraries.)

The other place where I’m losing time is when I’m swapping buffers – 31 ms is a long time! Now, it’s important to understand what happens in that part of the code. My drawing routine is double-buffered – I’m drawing to one set of bitplanes while another set is being displayed. When I finish, I need to tell the graphics hardware to start displaying the newer frame of graphics. But that swap can only happen during the monitor’s video blank interval, which means that I might have to wait as much as 17 ms to swap buffers.

That’s still a lot less than 31 ms, so what’s going on? My screen code and double-buffering code all uses the Amiga’s high-level UI library, Intuition, so I suspect that’s where a lot of the overhead is coming from. If I were to take over the display and use the graphics.library functions directly, I might get better results.

Fixing the Math

I knew I was only going to have a couple more evenings before the end of January and the end of the Winter Warmup, so I had to pick which one of these problem areas to work on. I decided to focus on speeding up the geometry transforms, since I had some ideas I wanted to pursue and it seemed like the most generally applicable choice.

The first thing I did, and the hardest, was to rewrite my vertex and matrix operations to get rid of the floating-point math, replacing it all with fixed-point operations.

Fixed-point math is an approach to representing fractional numbers that used to be fairly popular when computers didn’t have floating-point units. The idea is that you multiply your numbers by a scaling factor so that they can be represented as integers.

Here’s an example. Say you have the numbers 3.4 and 2.2, and you want to represent them as fixed-point numbers with a scaling factor of 100. The numbers would be represented as 340 and 220. To add or subtract fixed-point numbers, you just add or subtract the representations, so 340 + 220 = 560, which is 5.6 when you divide it by the scale factor.

Multiplication and division are more complicated, since you’re also multiplying or dividing the scale factor, and you have to tread carefully to handle that without losing data to overflow or underflow. The multiplication algorithm I ended up with was to divide each number by the square root of the scale factor, and then multiply them together, like so: 340 x 220 => 34 x 22 = 748, which is 7.48 when you convert it back to a floating point number (and yes, 3.4 x 2.2 = 7.48. I checked).

Now all this multiplying and dividing by scale factors probably doesn’t sound very fast. But if your scaling factor is 65536 – i.e. 2^16 – then multiplying and dividing is just shifting the bits of your number to the left or right. I just baked these routines in a handful of C preprocessor macros that I could use when rewriting my matrix routines:

#define FIXSHIFT 16 // shift 16 bits = scale factor 65536

#define HALFSHIFT 8

// convert float to fix (and back)

#define FLOATTOFIX(x) ((int)((x) * (1<<FIXSHIFT)))

#define FIXTOFLOAT(x) ((float)(x) / (1<<FIXSHIFT))

// convert int to fix (and back)

#define INTTOFIX(x) ((x)<<FIXSHIFT)

#define FIXTOINT(x) ((x)>>FIXSHIFT)

// multiply and divide

#define FIXMULT(x,y) (((x)>>HALFSHIFT)*((y)>>HALFSHIFT))

#define FIXDIV(x,y) (((x)/(y>>HALFSHIFT))<<HALFSHIFT)

Whew! That was a lot of work. And the result of all that meddling with my mathematics? 12.8 FPS!

Okay, that doesn’t sound like much. But think about it this way – my geometry transformations went from taking 54.9 ms to about 34 ms. That’s pretty significant, I think.

Or just get rid of it

With my mind thoroughly in the matrix code, I started looking for other optimizations. I’d made my multiplications and divisions faster, but could I get rid of some of them entirely?

Every frame, I’m creating three different rotation matrices – one for each axis – and multiplying them together. I could, algebraically, work out what that resulting matrix should look like and just plug in my sine and cosine values for the amount of rotation. More generally, there are shortcuts you can take anytime you’re multiplying two affine transformation matrices (like rotations or translations) together. In that case, you know the bottom row of each matrix is [0 0 0 1], so there are a bunch of terms you can eliminate.

I did the algebra on paper, and then made a special function for multiplying affine transformation matrices together. That eliminated something like 56 fixed-point multiplications each frame. I ran some tests and found that gave me almost another 2 FPS.

Transcendental defenstration

After all this, I was still doing three cosine and three sine operations each frame. Transcendental floating-point operations are expensive. I couldn’t just throw them out the window, but instead I replaced the function calls with lookups in a pregenerated table.

I hadn’t separated out the trig functions when I was taking my performance measurements, so I wasn’t sure how much difference that would make. I was pleasantly surprised by my final result – 16.8 fps. That’s almost a 70% improvement over where I started a couple days ago.

By the way, all those measurements above were done at regular old 7 MHz speed. With my new ACA 500, the frame rate gets a small boost up to 18.6 fps. That just makes it all the more obvious that the current bottleneck is that buffer swapping time.

And we’re done

I’m pretty much out of time for the Retrochallenge Winter Warmup, so that’s where we’ll pause for now. The current framerate looks reasonably smooth, and the math is correct, so at least it’s a good starting point for future projects.

There are some more optimizations I could make on the geometry calculations side, but right now the time it takes to swap buffers is overshadowing that significantly.

And, of course, I’ve also got new hardware considerations. I want to do a lot more analysis with the ACA 500 in place on my A500, and get a better sense of how much difference it makes.

If I want to get really serious, I could do a lot better job gathering timing information. It would be interesting to see how much variation there is from frame to frame (rather than collecting averages over multiple frames like I did above), and also to see exactly what effect waiting for the vertical sync has on performance.

So there we are: It was a pretty fun Retrochallenge project, and it definitely exercised some brain muscles. And like any really interesting project, it’s not really done. It’s just time to decide what to do next.